McKinsey Solve Assessment (2026 Guide)

The complete guide to McKinsey's digital assessment. What the games test, how to prepare, and what actually matters.

Key Takeaways

- Solve tests cognitive abilities, not business knowledge, so there is no "content" to study

- Redrock Study (data analysis) is the core module, and mental math fluency helps here

- Take it when mentally fresh (morning, well-rested), because cognitive performance drops when you are tired

- You cannot retake it for 12 months, so your one attempt matters

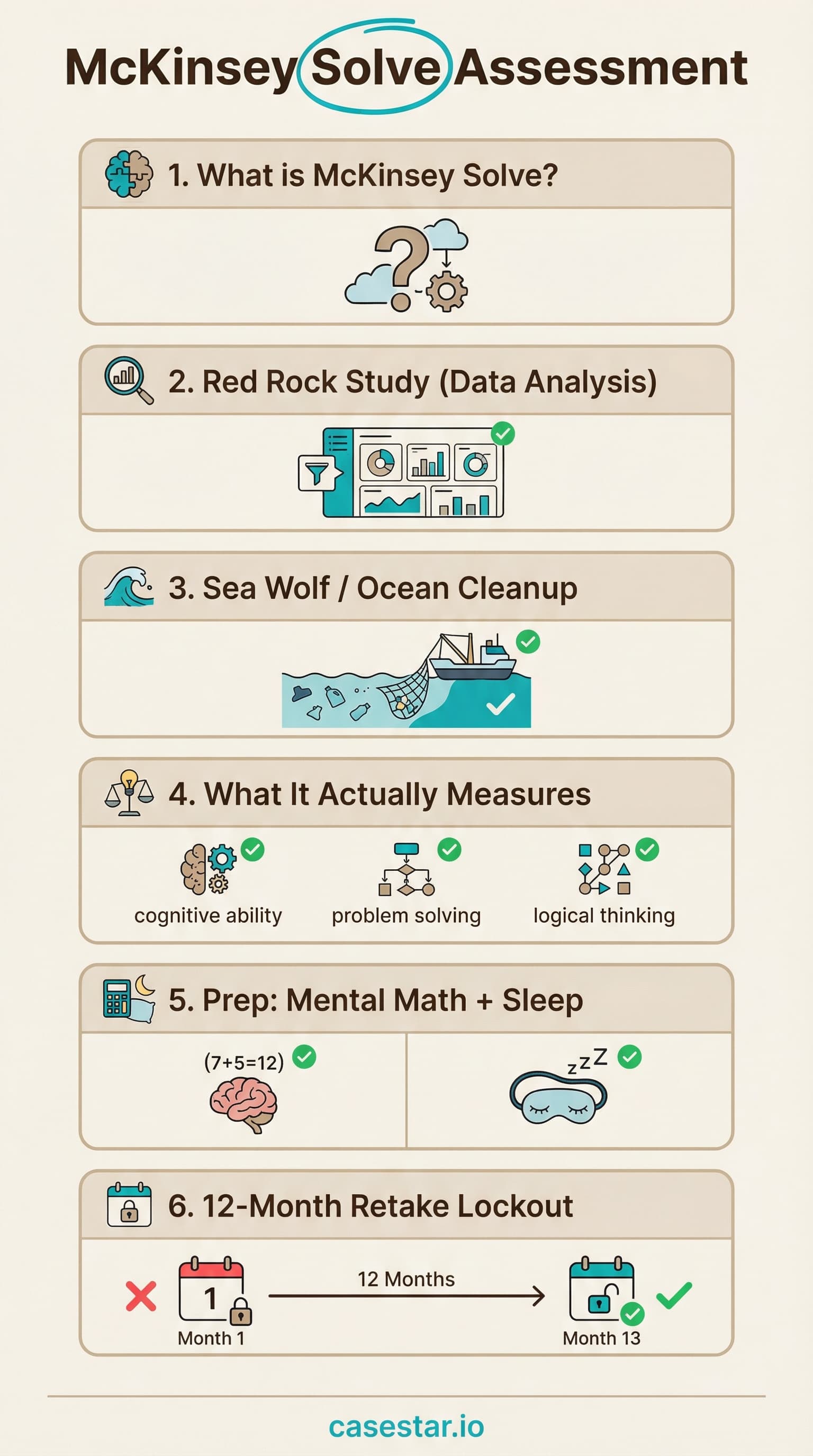

What is McKinsey Solve?

McKinsey Solve (formerly the Problem Solving Game or Imbellus assessment) is a gamified digital assessment that McKinsey uses to screen candidates before interviews. You complete it online, from home, after passing the resume screen.

Unlike traditional aptitude tests with multiple-choice questions, Solve uses interactive game-like scenarios to measure how you think. McKinsey designed it specifically to be difficult to "prep" for in traditional ways.

Quick Facts

Duration

60-70 minutes (currently 2 modules: Sea Wolf ~30 + Redrock ~35)

Format

Online, from home, browser-based

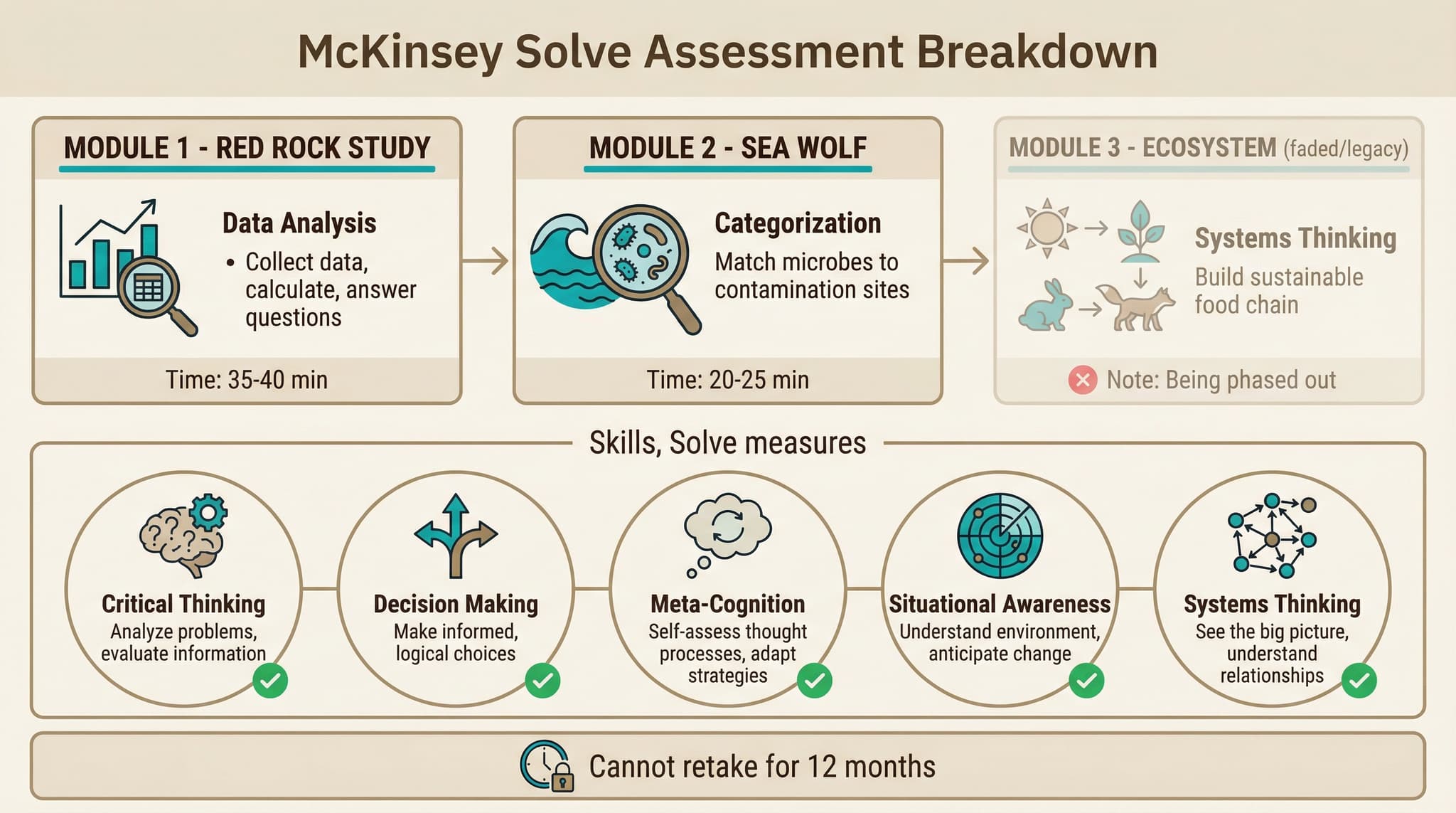

Retake Policy

Cannot retake for 12 months

When

After resume screen, before interviews

What Solve Actually Measures

McKinsey has stated that Solve measures five core problem-solving dimensions. Understanding these helps you know what behaviors the games are looking for.

Critical Thinking

Can you evaluate information objectively, identify what's relevant, and draw logical conclusions? The games present data and require you to separate signal from noise.

Decision Making

Can you make sound decisions with incomplete information and under time pressure? You won't have all the data you want—you must decide anyway.

Meta-Cognition

Are you aware of your own thinking process? Do you recognize when you're uncertain and adjust? The games track how you explore options and revise your approach.

Situational Awareness

Can you understand complex systems and anticipate how changes ripple through them? Especially relevant in ecosystem-style games.

Systems Thinking

Can you see how parts connect to form a whole? Understand interdependencies? The games require optimizing across multiple variables simultaneously.

Key insight: None of these are about business knowledge or case frameworks. Solve tests raw cognitive abilities. Someone who has never heard of consulting can score well if they think clearly under pressure.

The Assessment Modules (2026)

McKinsey regularly updates Solve. As of 2026, most candidates receive two modules — Sea Wolf and Redrock Study. McKinsey is reportedly piloting a third behavioral module, Sustainable Future Lab, in select offices and cohorts starting in early 2026. The specific combination varies by office and candidate pool.

Redrock Study (Data Analysis)

You play a researcher investigating a mystery (like disease spread or species decline). You must collect data, perform calculations, and answer questions about what the data shows.

What happens:

- You're given a research question to investigate

- You choose which data sources to examine (limited "budget")

- Data appears as charts, tables, or maps

- You must answer multiple-choice questions about findings

- Some questions require calculations (percentages, ratios, comparisons)

What it tests:

- Data interpretation speed

- Prioritization (which data to gather first)

- Mental math fluency

- Pattern recognition in data

Prep tip: This is where mental math practice actually helps. Being able to quickly calculate "what's 23% of 847?" saves time and reduces errors.
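One way to approach the "23% of 847" example in your head is to split the percentage into 10% and 1% chunks, each of which is just a decimal shift. The sketch below illustrates that decomposition; the function name `percent_of` is made up for this example and has nothing to do with the assessment itself.

```python
def percent_of(percent: int, value: float) -> float:
    """Compute percent% of value by combining 10% and 1% chunks."""
    ten_pct = value / 10    # move the decimal point one place
    one_pct = value / 100   # move the decimal point two places
    tens, ones = divmod(percent, 10)  # 23 -> 2 tens and 3 ones
    return tens * ten_pct + ones * one_pct


# 23% of 847 = 2 * 84.7 + 3 * 8.47 = 169.4 + 25.41
print(round(percent_of(23, 847), 2))  # 194.81
```

In practice you would round along the way (for example, 170 + 25 = 195), which is usually close enough to pick between multiple-choice options.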

Sea Wolf / Ocean Cleanup

You must clean up ocean sites by selecting the right microbes. Each microbe has characteristics that make it effective for certain contaminants. You categorize and deploy them strategically.

What happens:

- Multiple ocean sites need cleanup

- Each site has specific contamination profiles

- You choose microbes based on their properties

- Time-limited decisions required

What it tests:

- Rapid categorization

- Rule application under pressure

- Decision accuracy vs. speed tradeoff

Sustainable Future Lab

McKinsey is reportedly piloting Sustainable Future Lab in early 2026 as a third Solve module focused on behavioral decision-making. Candidates are placed inside a scenario-style simulation (often framed around environmental or sustainability themes) and asked to make a sequence of judgment calls as the situation evolves. Early reports from select offices describe a drag-and-drop prioritization step followed by scenario-based multiple-choice decisions.

What's being reported:

- Introduced in limited cohorts starting March-April 2026

- Availability varies by office and recruiting cycle — verify with your recruiter

- Candidates who receive it have described invitations noting a longer total assessment window

- Focus is behavioral / judgment-based rather than quantitative

What it appears to test:

- Prioritization under ambiguity

- Trade-off reasoning and stakeholder judgment

- Interpreting messy or incomplete information

Note: Sustainable Future Lab does not replace Sea Wolf or Redrock — where it appears, it is reportedly an additional module layered on top. Because it is still a pilot, exact duration and scoring details are not yet consistently documented. Treat all specifics as provisional and confirm with your recruiter if your invitation references a third module.

Ecosystem Building

The original Solve module. You build a sustainable ecosystem by placing species on a terrain. Each species has food requirements and predator/prey relationships. It is being phased out but may still appear in some tracks.

What it tests:

- Systems thinking (food chain balance)

- Spatial reasoning (terrain placement)

- Constraint satisfaction (calorie requirements)

Note: This module is being phased out globally as of 2025-2026. Most candidates will not receive it, but some recruitment tracks may still include it.

Preparation Strategies

The Uncomfortable Truth

McKinsey intentionally designed Solve to resist traditional preparation. Unlike the PST (the old multiple-choice test), you cannot buy a prep book and grind through practice questions. The games measure underlying cognitive abilities that develop over years, not weeks.

What Actually Helps

1. Mental Math Fluency

The Redrock module requires quick calculations. If you spend 30 seconds on every percentage calculation, you'll run out of time.

How to build it: Daily mental math drills for 15-20 minutes over 2-4 weeks. Focus on percentages, ratios, and large number division.
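If you would rather build your own drills than use an app, a few lines of Python can generate them. This is a minimal sketch: the question types mirror the three focus areas above, while the number ranges and the 2% tolerance are arbitrary assumptions, not anything taken from Solve.

```python
import random


def make_drill(rng: random.Random) -> tuple[str, float]:
    """Return one (question, exact_answer) pair: percentage, ratio, or division."""
    kind = rng.choice(["percent", "ratio", "division"])
    if kind == "percent":
        p, n = rng.randint(2, 95), rng.randint(100, 999)
        return f"What is {p}% of {n}?", p * n / 100
    if kind == "ratio":
        a, b = rng.randint(10, 99), rng.randint(10, 99)
        return f"What is {a}:{b} as a decimal?", a / b
    divisor = rng.randint(12, 99)
    dividend = rng.randint(10_000, 999_999)
    return f"What is {dividend:,} / {divisor}?", dividend / divisor


def is_close_enough(guess: float, answer: float, tolerance: float = 0.02) -> bool:
    """Accept a guess within a relative tolerance (2% here, an assumed threshold)."""
    return abs(guess - answer) <= tolerance * abs(answer)


rng = random.Random(0)  # fixed seed so a session can be repeated
for _ in range(3):
    question, answer = make_drill(rng)
    print(question, "->", round(answer, 2))
```

Scoring with a tolerance rather than exact equality rewards fast estimation, which is the skill the drills are meant to build.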

2. Data Interpretation Practice

Get comfortable reading charts and tables quickly. Practice extracting the key insight in under 30 seconds.

How to build it: Read Financial Times or Economist charts daily. For each chart, ask: "What's the trend? What's the anomaly? What's the implication?"

3. Cognitive Training Apps

Brain training apps develop the underlying skills Solve measures. They're not direct prep but build relevant cognitive capacities.

Options: Lumosity, Elevate, Peak, Fit Brains. 15-20 minutes daily for 2-4 weeks before your test.

4. Sleep and Timing

Cognitive performance drops significantly when tired. This is probably the highest-impact "prep" you can do.

Action: Take the test in the morning after 8+ hours of sleep. Don't schedule it after a long work day. Find a quiet space with no interruptions.

What Doesn't Help

- Paid "Solve prep" courses: Most are scams selling generic brain games at premium prices

- Trying to "game" the system: McKinsey tracks behavioral patterns, not just answers

- Memorizing "strategies": The games adapt and each instance is different

- Cramming the night before: Cognitive abilities don't improve overnight

Common Mistakes

Taking It While Tired

Many candidates schedule Solve after work or late at night. Cognitive performance drops 20-40% when fatigued. This is avoidable.

Rushing Without Reading

The games have instructions. Candidates who skip them make preventable mistakes. Take 30 seconds to understand what you're being asked.

Perfectionism

Some candidates spend too long optimizing each answer. Time management matters. A good answer submitted is better than a perfect answer you ran out of time for.

Technical Issues

Unstable internet, notifications popping up, roommates interrupting. Test your setup beforehand. Use a quiet space with reliable wifi.

Over-Preparing Strategy

Reading 10 articles about "how to beat Solve" creates anxiety and false expectations. The games are designed to be novel. Accept that.

Ignoring Time Warnings

The games show time remaining. Some candidates get absorbed and don't notice. Pace yourself and check periodically.

Day of the Test

Pre-Test Checklist

1. 8+ hours of sleep the night before. Non-negotiable.

2. Morning timing if possible. Most people are sharpest 2-4 hours after waking.

3. Quiet space with no interruptions. Tell roommates/family not to disturb you.

4. Test your setup: reliable internet, charged laptop, working browser.

5. Light meal beforehand: not hungry, not stuffed. Your brain needs glucose.

6. Disable notifications on your computer. Put your phone in another room.

What Happens After

After completing Solve, McKinsey's algorithm analyzes your results. You will not receive a score or detailed feedback. You'll simply hear whether you're moving forward to interviews or not.

Timeline

- Results: Usually within 1-2 weeks, sometimes faster

- If you pass: You'll be invited to first-round interviews

- If you don't pass: You cannot reapply for 12 months (and your Solve results may be reused)

Important: Solve results are shared across McKinsey offices. If you apply to multiple offices, you only take Solve once. The results follow your application.

Solve vs Other Firm Assessments

| Aspect | McKinsey Solve | BCG Casey | Bain SOVA |
|---|---|---|---|
| Format | Gamified simulations | AI chatbot case study | Aptitude + video |
| Duration | 60-70 min | 25-30 min | 45-60 min |
| Tests | Cognitive abilities | Case solving skills | Aptitude + personality |
| Prep approach | Mental math, rest | Case practice | Practice tests |

Build Your Mental Math Speed

Mental math fluency is one of the few things that actually helps with Solve. Practice with CaseStar's timed drills.

Related: McKinsey's New AI Interview (Lilli)

Separate from Solve, McKinsey has reportedly begun piloting an AI-assisted case interview using Lilli, its proprietary internal AI assistant. Coverage in late 2025 / early 2026 describes it as a non-evaluative pilot running in select US final rounds — meaning the interview is layered on top of the standard case and PEI, and reporting so far suggests it does not directly drive the hire decision.

What's been reported:

- Lilli is McKinsey's internal AI assistant, now surfaced as a case-interview simulator in a pilot setting

- Running at a limited set of US offices, primarily in final rounds

- Candidates interact with Lilli to explore a business scenario and build a structured recommendation

- Currently described as non-evaluative — scoring is reportedly not tied to the hire decision

- Do not confuse this with Solve — Lilli is a separate, interview-style assessment, not a Solve module

How to think about it: If your recruiter mentions a Lilli-based interview, treat it like a normal case — define the problem, structure your approach, interrogate the outputs you get back, and synthesize a clear recommendation. The public reporting suggests McKinsey is testing how candidates collaborate with AI, not testing prompt-engineering skill. Confirm scope and weighting with your recruiter, since the pilot is still evolving.
